If you’re on Twitter or otherwise follow Coding Horror, you know that Jeff Atwood suffered a complete loss of his blog.

My site and blog both have nightly database backups, but I’ve never tried to restore them, so they’re untested and therefore only slightly better than useless. I also worry sometimes that those backups hold data from a really old blogging engine, one I’d never use again if I had to start from scratch or rebuild a server.

So, I figured it would be good not only to back up all the databases and code as usual, but also to crawl my site and my blog and snag the HTML and images directly. This would be helpful for a recovery, but may also come in handy when I convert my site over to Umbraco: I can work from the rendered HTML when converting some of the pages. Plus, I may have to go back some day and pull something I missed, long after I take the original site down.

Jon Galloway turned me on to WinHTTrack, a client application that crawls whatever site you point it at and downloads everything.

Don’t use this app for evil.

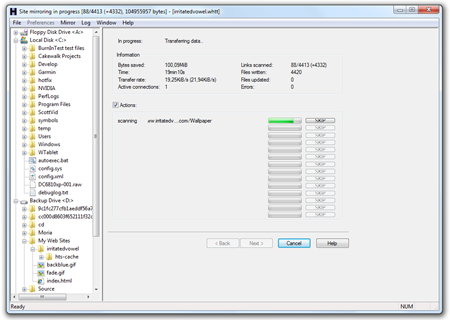

Here’s a screenshot of it early in the process of backing up my main site, www.irritatedvowel.com. (I’ll point it at my blog next.)

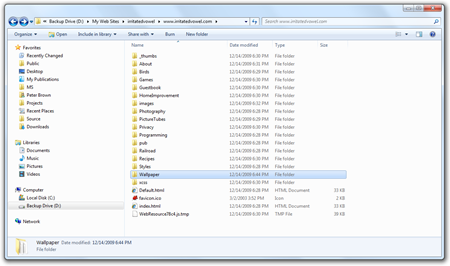

When WinHTTrack sucks down your site, it rewrites all the URLs so they work locally. You can set it to exclude certain file types and MIME types, and set a number of other options so that you back up only what you’re interested in. The end result is a nice folder structure of HTML files you can browse locally.
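To make the URL rewriting and file-type exclusion a little more concrete, here is a minimal Python sketch of the general idea. It is not WinHTTrack’s actual code or configuration; the function names (`url_to_local_path`, `localize_link`, `should_download`) and the `EXCLUDED_EXTENSIONS` set are hypothetical, and it assumes a simple layout where each host gets its own folder and directory URLs are saved as `index.html`.

```python
import posixpath
from pathlib import PurePosixPath
from urllib.parse import urlparse

# Hypothetical exclusion list; a real crawler lets you configure this.
EXCLUDED_EXTENSIONS = {".zip", ".exe", ".iso"}


def url_to_local_path(url: str) -> str:
    """Map an absolute URL to a file path inside the mirror folder.

    Assumption: one folder per host, and URLs ending in "/" are
    saved as index.html. Query strings are ignored in this sketch.
    """
    parts = urlparse(url)
    path = parts.path or "/"
    if path.endswith("/"):
        path += "index.html"
    return parts.netloc + path


def localize_link(page_url: str, link_url: str) -> str:
    """Rewrite link_url as a path relative to the saved copy of page_url."""
    page_file = url_to_local_path(page_url)
    target_file = url_to_local_path(link_url)
    # Relative to the folder containing the page, so the link
    # keeps working wherever the mirror folder is copied.
    return posixpath.relpath(target_file, posixpath.dirname(page_file))


def should_download(url: str) -> bool:
    """Skip URLs whose file extension is on the exclusion list."""
    ext = PurePosixPath(urlparse(url).path).suffix.lower()
    return ext not in EXCLUDED_EXTENSIONS


if __name__ == "__main__":
    page = "http://www.irritatedvowel.com/blog/post.html"
    print(localize_link(page, "http://www.irritatedvowel.com/images/logo.png"))
    print(should_download("http://www.irritatedvowel.com/files/demo.zip"))
```

Using relative links rather than absolute local paths is what lets the mirrored folder be moved or zipped up and still browse correctly; in this sketch, a link from `/blog/post.html` to `/images/logo.png` becomes `../images/logo.png`.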

You can get WinHTTrack Website Copier from the HTTrack website.

Of course, you should still back up your data, templates, source files, and everything else you’d need to reuse them or do an actual recovery of your site. A crawl like this won’t get you there on its own, but it will give you a technology-agnostic backup of your rendered content.